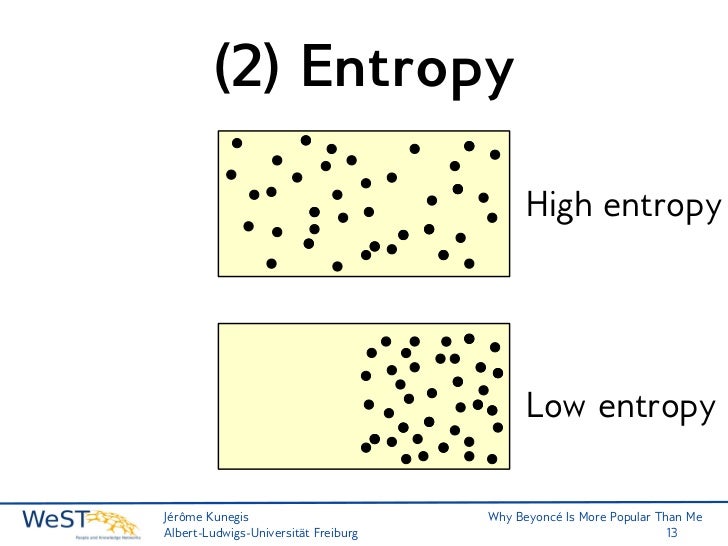

Quantum information science is a young field, its underpinnings still being laid by a large number of researchers. Classical information science, by contrast, sprang forth in the late 1940s from the work of one remarkable man: Claude E. Shannon. In a landmark paper written at Bell Labs in 1948, Shannon defined in mathematical terms what information is and how it can be transmitted in the face of noise. Modes of communication that had been viewed as quite distinct (the telegraph, telephone, radio and television) were unified in a single framework.

Shannon was born in 1916 in Petoskey, Michigan, the son of a judge and a teacher. Among other inventive endeavors, as a youth he built a telegraph from his house to a friend's out of fencing wire. He graduated from the University of Michigan with degrees in electrical engineering and mathematics in 1936 and went to M.I.T., where he worked under computer pioneer Vannevar Bush on an analog computer called the differential analyzer.

Shannon's M.I.T. master's thesis in electrical engineering has been called the most important master's thesis of the 20th century: in it the 22-year-old Shannon showed how the logical algebra of 19th-century mathematician George Boole could be implemented using electronic circuits of relays and switches. The most fundamental feature of digital computer design, representing "true" and "false" (or "0" and "1") as open or closed switches and using electronic logic gates to make decisions and carry out arithmetic, can be traced back to the insights in that thesis.

With a Ph.D. in mathematics under his belt, Shannon went to Bell Labs, where he worked on war-related matters, including cryptography. Unknown to those around him, he was also working on the theory behind information and communications. In 1948 this work emerged in a celebrated paper published in two parts in Bell Labs's research journal, the Bell System Technical Journal.

Shannon defined the quantity of information produced by a source (for example, the quantity in a message) by a formula similar to the equation that defines thermodynamic entropy in physics. In its most basic terms, Shannon's informational entropy is the number of binary digits required, on average, to encode a message. Today that sounds like a simple, even obvious way to define how much information is in a message. In 1948, at the very dawn of the information age, this digitizing of information of any sort was a revolutionary step. His paper may have been the first published use of the word "bit," short for binary digit, a term Shannon credited to John W. Tukey.

As well as defining information, Shannon analyzed the ability to send information through a communications channel. He found that a channel has a certain maximum transmission rate that cannot be exceeded. Today we call that the capacity of the channel (informally, its bandwidth). Shannon demonstrated mathematically that even over a noisy, low-bandwidth channel, essentially perfect, error-free communication could be achieved by keeping the transmission rate below the channel's capacity and by using error-correcting schemes: transmitting additional bits that enable the data to be extracted from the noise-ridden signal.

Today everything from modems to music CDs relies on error correction to function. A major accomplishment of quantum-information scientists has been the development of techniques to correct errors introduced in quantum information and to determine just how much can be done with a noisy quantum communications channel or with entangled quantum bits (qubits) whose entanglement has been partially degraded by noise.

A year after he launched information theory, Shannon published a paper that proved unbreakable cryptography was possible. (He did this work in 1945, but at that time it was classified.) The scheme is called the one-time pad or the Vernam cipher, after Gilbert Vernam, who invented it near the end of World War I. The idea is to encode the message with a random series of digits, the key, so that the encoded message is itself completely random. The catch is that the random key must be as long as the message to be encoded, and no key may ever be used twice. Shannon's contribution was to prove rigorously that this code is unbreakable. To this day, no other encryption scheme is known to be unbreakable.
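The one-time pad described above can be sketched in a few lines of Python. This is a minimal illustration, not a vetted cryptographic implementation: it works on bytes rather than decimal digits, combines message and key with bitwise XOR (modulo-2 addition, the operation Vernam's teleprinter system used), and draws the key from Python's standard `secrets` module. The function names `make_key`, `xor_bytes`, `encrypt` and `decrypt` are hypothetical, chosen only for this example.

```python
import secrets


def make_key(length: int) -> bytes:
    """Return a fresh random key of the given length. Never reuse it."""
    return secrets.token_bytes(length)


def xor_bytes(data: bytes, key: bytes) -> bytes:
    """XOR each data byte with the corresponding key byte."""
    # In this sketch the key must match the message length exactly.
    if len(key) != len(data):
        raise ValueError("one-time pad key must be as long as the message")
    return bytes(d ^ k for d, k in zip(data, key))


def encrypt(message: bytes, key: bytes) -> bytes:
    return xor_bytes(message, key)


def decrypt(ciphertext: bytes, key: bytes) -> bytes:
    # XOR is its own inverse: (m ^ k) ^ k == m
    return xor_bytes(ciphertext, key)


if __name__ == "__main__":
    message = b"ATTACK AT DAWN"
    key = make_key(len(message))
    ciphertext = encrypt(message, key)
    print(ciphertext.hex())          # looks like random noise
    print(decrypt(ciphertext, key))  # b'ATTACK AT DAWN'
```

Because every plaintext of a given length corresponds to some key, the ciphertext on its own reveals nothing beyond the message length. Reusing a key breaks this: XORing two ciphertexts made with the same key cancels the key and leaves the XOR of the two plaintexts.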
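The thesis insight that logic gates can "carry out arithmetic" can be made concrete with a one-bit full adder built only from XOR, AND and OR, chained bit by bit to add binary numbers. This is a minimal sketch in Python rather than relays, illustrating the principle rather than reproducing any circuit from Shannon's thesis; `full_adder`, `add_binary` and the 8-bit default `width` are hypothetical choices for this example.

```python
def full_adder(a: int, b: int, carry_in: int) -> tuple[int, int]:
    """Add three bits using only XOR, AND and OR; return (sum_bit, carry_out)."""
    partial = a ^ b
    sum_bit = partial ^ carry_in
    carry_out = (a & b) | (partial & carry_in)
    return sum_bit, carry_out


def add_binary(x: int, y: int, width: int = 8) -> int:
    """Ripple-carry addition of two non-negative integers, one bit at a time."""
    result, carry = 0, 0
    for i in range(width):
        a = (x >> i) & 1  # i-th bit of x
        b = (y >> i) & 1  # i-th bit of y
        s, carry = full_adder(a, b, carry)
        result |= s << i
    return result  # any carry out of the top bit is discarded (overflow)


if __name__ == "__main__":
    print(add_binary(0b0101, 0b0011))  # 5 + 3 = 8
```

Each stage passes its carry to the next, just as a chain of switching circuits would; with `width=8`, sums of 256 or more wrap around.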
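Shannon's entropy formula, mentioned above, is usually written `H = -Σ p_i · log2(p_i)` bits per symbol, where `p_i` is the probability of the i-th symbol. The sketch below estimates it from character frequencies in a sample string. That is an assumption: it treats each character as an independent draw from a fixed source and ignores structure between successive characters. The function name `entropy_bits` is hypothetical.

```python
import math
from collections import Counter


def entropy_bits(message: str) -> float:
    """Estimate Shannon entropy, in bits per symbol, from character frequencies."""
    if not message:
        return 0.0
    counts = Counter(message)
    n = len(message)
    # H = sum(p * log2(1/p)), equivalent to -sum(p * log2(p))
    return sum((c / n) * math.log2(n / c) for c in counts.values())


if __name__ == "__main__":
    for text in ["aaaa", "abab", "abcd", "hello world"]:
        h = entropy_bits(text)
        print(f"{text!r}: {h:.3f} bits/symbol, about {h * len(text):.1f} bits total")
```

For "abcd" each character carries 2 bits, matching the intuition that four equally likely symbols need two binary digits each; for "aaaa" the result is 0, since a perfectly predictable source conveys no information.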
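Shannon's theorem shows that good error-correcting codes exist, but it does not construct one. The simplest illustration of "transmitting additional bits" is a three-fold repetition code: each bit is sent three times and the receiver takes a majority vote, so a single flipped bit in any group of three is corrected. This is a deliberately naive stand-in chosen for clarity, not one of the far more efficient codes used in practice (CDs, for instance, use Reed-Solomon codes). The noise model (each bit flipped independently with probability `flip_prob`, a binary symmetric channel) and all function names here are assumptions for this example.

```python
import random


def encode(bits: list[int]) -> list[int]:
    """Repeat every bit three times: 1 -> 1, 1, 1."""
    return [b for b in bits for _ in range(3)]


def decode(received: list[int]) -> list[int]:
    """Majority vote over each group of three received bits."""
    return [1 if sum(received[i:i + 3]) >= 2 else 0
            for i in range(0, len(received), 3)]


def noisy_channel(bits: list[int], flip_prob: float, rng: random.Random) -> list[int]:
    """Flip each bit independently with probability flip_prob."""
    return [b ^ 1 if rng.random() < flip_prob else b for b in bits]


if __name__ == "__main__":
    rng = random.Random(0)
    message = [rng.randint(0, 1) for _ in range(10_000)]
    p = 0.05
    raw = noisy_channel(message, p, rng)
    coded = decode(noisy_channel(encode(message), p, rng))
    raw_errors = sum(a != b for a, b in zip(message, raw))
    coded_errors = sum(a != b for a, b in zip(message, coded))
    print(f"uncoded bit errors: {raw_errors}, repetition-coded bit errors: {coded_errors}")
```

With `flip_prob = 0.05`, a decoded bit is wrong only if two or three of its copies flip, which happens with probability 3p²(1 − p) + p³ ≈ 0.0073, roughly a sevenfold improvement paid for by sending three times as many bits. Shannon's result is stronger: below capacity, cleverer codes can drive the error rate toward zero without the transmission rate also shrinking toward zero, as it must for repetition codes.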
